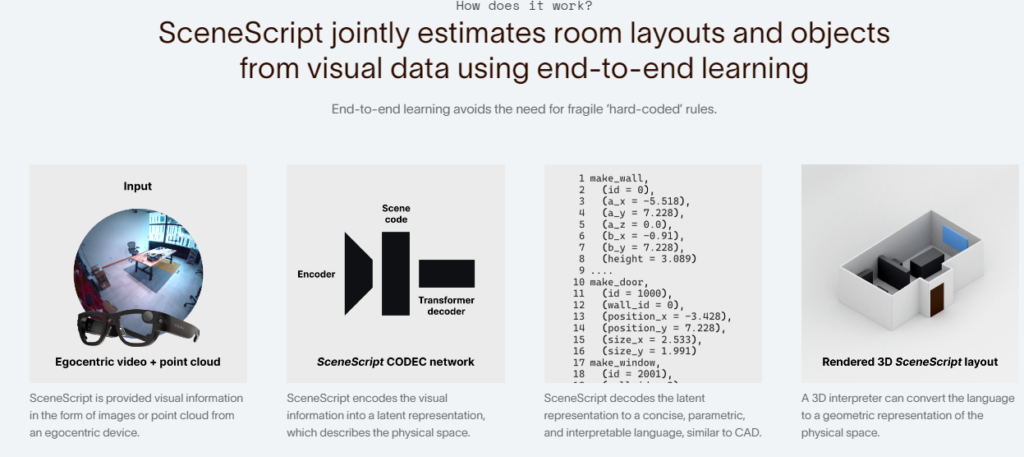

SceneScript is a method that directly produces full scene models as a sequence of structured language commands using an autoregressive, token-based approach. Our proposed scene representation is inspired by recent successes in transformers & LLMs, and departs from more traditional methods, which commonly describe scenes as meshes, voxel grids, point clouds or radiance fields.

This method infers the set of structured language commands directly from encoded visual data using a scene language encoder-decoder architecture. To train SceneScript, we generate and release a large-scale synthetic dataset called Aria Synthetic Environments consisting of 100k high-quality indoor scenes, with photorealistic and ground-truth annotated renders of egocentric scene walkthroughs.
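To make the idea of a scene expressed as structured language commands more concrete, here is a minimal sketch of reading such a sequence into plain records. The line format, the command names (`make_wall`, `make_door`) and every parameter name are illustrative assumptions, not details taken from the text above.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Command:
    """One structured command: a name plus numeric parameters."""
    name: str
    params: dict


def parse_scene(text: str) -> list[Command]:
    """Parse one command per line, written as `name, key=value, key=value`."""
    commands = []
    for line in text.strip().splitlines():
        line = line.strip()
        if not line:
            continue
        name, *pairs = [part.strip() for part in line.split(",")]
        params = {}
        for pair in pairs:
            key, value = pair.split("=")
            params[key.strip()] = float(value)
        commands.append(Command(name, params))
    return commands


# Hypothetical scene: command and parameter names are assumptions.
SCENE = """
make_wall, id=0, a_x=0.0, a_y=0.0, b_x=4.0, b_y=0.0, height=2.7
make_wall, id=1, a_x=4.0, a_y=0.0, b_x=4.0, b_y=3.0, height=2.7
make_door, id=2, wall_id=0, pos_x=1.0, width=0.9, height=2.0
"""

scene = parse_scene(SCENE)
for cmd in scene:
    print(cmd.name, cmd.params)
```

A flat list of named commands like this is easy to tokenize and to emit one element at a time, which is what makes it a natural fit for an autoregressive decoder.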

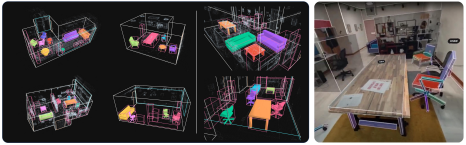

This method gives state-of-the-art results in architectural layout estimation and competitive results in 3D object detection. Lastly, we explore a further advantage of SceneScript: it readily adapts to new commands via simple additions to the structured language, which we illustrate for tasks such as coarse 3D object part reconstruction.
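The extensibility point can be illustrated on the consumer side with a small command registry. This is a sketch under assumptions: the command names (`make_wall`, `make_bbox`, `make_prim`) and their parameters are hypothetical, and the code covers only how an interpreter could accept a new command, not how a model would learn to emit it.

```python
from typing import Callable, Dict, List, Tuple

# Maps a command name to the function that interprets its parameters.
HANDLERS: Dict[str, Callable[[dict], str]] = {}


def command(name: str):
    """Decorator that registers a handler for one command name."""
    def register(fn: Callable[[dict], str]) -> Callable[[dict], str]:
        HANDLERS[name] = fn
        return fn
    return register


@command("make_wall")
def handle_wall(p: dict) -> str:
    return f"wall with height {p['height']}"


@command("make_bbox")
def handle_bbox(p: dict) -> str:
    return f"box for class {p['cls']}"


def interpret(commands: List[Tuple[str, dict]]) -> List[str]:
    """Dispatch each (name, params) pair to its registered handler."""
    return [HANDLERS[name](params) for name, params in commands]


# Extending the language: one new registration, no change to interpret().
@command("make_prim")
def handle_prim(p: dict) -> str:
    return f"part primitive of parent {p['parent_id']}"


result = interpret([
    ("make_wall", {"height": 2.7}),
    ("make_bbox", {"cls": "table"}),
    ("make_prim", {"parent_id": 1}),
])
print(result)
```

The design choice worth noting is that the dispatch loop never changes: a new element type is a new entry in the vocabulary rather than a new output representation.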

SceneScript allows AR & AI devices to understand the geometry of physical spaces.

Meta says the system uses the same underlying technique as large language models (LLMs), except that instead of predicting the next language fragment, it predicts architectural and furniture elements within a 3D point cloud capture, of the same kind that headsets already capture to enable standalone positional tracking.

The output is a series of primitive 3D shapes that represent the basic boundaries of each piece of furniture or architectural element.
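As a rough illustration of what a primitive shape might carry, the sketch below expands a box into its eight corner points. It assumes a box is described by a center, a size along each axis, and a rotation about the vertical axis; that parameterization is an assumption for the example, not something stated in the text.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float, float]


def box_corners(center: Point, size: Point, yaw: float) -> List[Point]:
    """Return the 8 corners of a box rotated by `yaw` radians about the z axis."""
    cx, cy, cz = center
    sx, sy, sz = size
    c, s = math.cos(yaw), math.sin(yaw)
    corners = []
    for dx in (-0.5, 0.5):
        for dy in (-0.5, 0.5):
            for dz in (-0.5, 0.5):
                # Corner offset in the box's own frame.
                x, y, z = dx * sx, dy * sy, dz * sz
                # Rotate in the horizontal plane, then translate to the center.
                corners.append((cx + c * x - s * y, cy + s * x + c * y, cz + z))
    return corners


# Hypothetical table-sized box, 1.2 m x 0.8 m x 0.75 m, turned by 90 degrees.
corners = box_corners((2.0, 1.0, 0.375), (1.2, 0.8, 0.75), math.pi / 2)
for corner in corners:
    print(tuple(round(v, 3) for v in corner))
```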

This new technique uses the depth sensor, along with cameras, to make a complete map of the room, including automatically mapped furniture and other architectural features.

https://ai.meta.com/blog/scenescript-3d-scene-reconstruction-reality-labs-research/