Real-time Human Pose Estimation in the Browser with TensorFlow.js

Posted by: Dan Oved, freelance creative technologist at Google Creative Lab, graduate student at ITP, NYU. Editing and illustrations: Irene Alvarado, creative technologist and Alexis Gallo, freelance graphic designer, at Google Creative Lab.

UPDATE: PoseNet 2.0 has been released with improved accuracy (based on ResNet50), a new API, weight quantization, and support for different image sizes. With default settings, it runs at 10 fps on a 2018 13-inch MacBook Pro. See the GitHub README for more details.

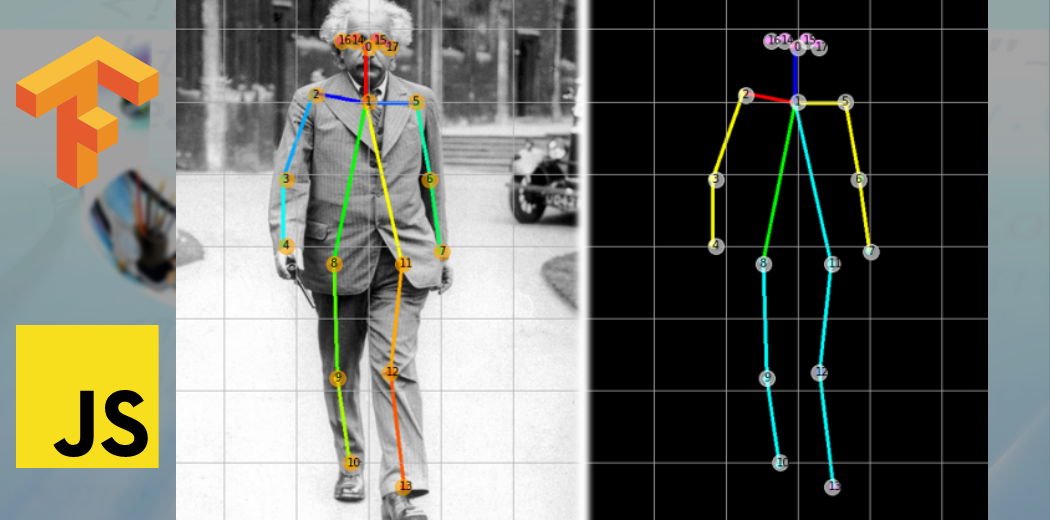

In collaboration with Google Creative Lab, I’m excited to announce the release of a TensorFlow.js version of PoseNet, a machine learning model which allows for real-time human pose estimation in the browser. Try a live demo here.

PoseNet can detect human figures in images and videos using either a single-pose algorithm…

So what is pose estimation anyway? Pose estimation refers to computer vision techniques that detect human figures in images and video, so that one could determine, for example, where someone’s elbow shows up in an image. To be clear, this technology is not recognizing who is in an image: there is no personally identifiable information associated with pose detection. The algorithm is simply estimating where key body joints are.

… or multi-pose algorithm, all from within the browser.

Ok, and why is this exciting to begin with? Pose estimation has many uses, from interactive installations that react to the body to augmented reality, animation, fitness applications, and more. We hope the accessibility of this model inspires more developers and makers to experiment and apply pose detection to their own unique projects. While many alternative pose detection systems have been open-sourced, all require specialized hardware and/or cameras, as well as quite a bit of system setup.

With PoseNet running on TensorFlow.js, anyone with a decent webcam-equipped desktop or phone can experience this technology right from within a web browser. And since we’ve open sourced the model, JavaScript developers can tinker with and use this technology with just a few lines of code. What’s more, this can actually help preserve user privacy: since PoseNet on TensorFlow.js runs in the browser, no pose data ever leaves a user’s computer.

Before we dig into the details of how to use this model, a shoutout to all the folks who made this project possible: George Papandreou and Tyler Zhu, Google researchers behind the papers Towards Accurate Multi-person Pose Estimation in the Wild and PersonLab: Person Pose Estimation and Instance Segmentation with a Bottom-Up, Part-Based, Geometric Embedding Model, and Nikhil Thorat and Daniel Smilkov, engineers on the Google Brain team behind the TensorFlow.js library.

Getting Started with PoseNet

PoseNet can be used to estimate either a single pose or multiple poses, meaning there is a version of the algorithm that can detect only one person in an image/video and one version that can detect multiple persons in an image/video. Why are there two versions? The single person pose detector is faster and simpler but requires only one subject present in the image (more on that later). We cover the single-pose one first because it’s easier to follow.

At a high level pose estimation happens in two phases:

- An input RGB image is fed through a convolutional neural network.

- Either a single-pose or multi-pose decoding algorithm is used to decode poses, pose confidence scores, keypoint positions, and keypoint confidence scores from the model outputs.

But wait, what do all these keywords mean? Let’s review the most important ones:

- Pose — at the highest level, PoseNet will return a pose object that contains a list of keypoints and an instance-level confidence score for each detected person.

PoseNet returns confidence values for each person detected as well as each pose keypoint detected. Image Credit: “Microsoft Coco: Common Objects in Context Dataset”, https://cocodataset.org

- Pose confidence score — this determines the overall confidence in the estimation of a pose. It ranges between 0.0 and 1.0. It can be used to hide poses that are not deemed strong enough.

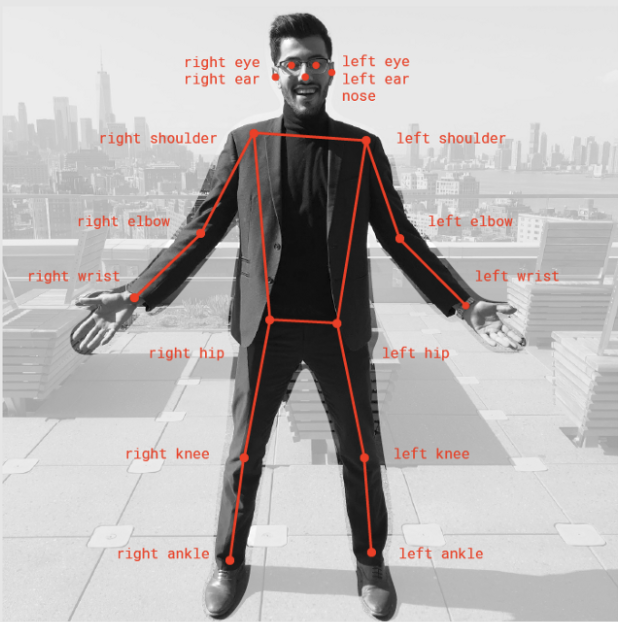

- Keypoint — a part of a person’s pose that is estimated, such as the nose, right ear, left knee, right foot, etc. It contains both a position and a keypoint confidence score. PoseNet currently detects 17 keypoints illustrated in the following diagram:

Seventeen pose keypoints detected by PoseNet.

- Keypoint Confidence Score — this determines the confidence that an estimated keypoint position is accurate. It ranges between 0.0 and 1.0. It can be used to hide keypoints that are not deemed strong enough.

- Keypoint Position — 2D x and y coordinates in the original input image where a keypoint has been detected.
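To make these terms concrete, here is a minimal sketch in plain JavaScript that attaches part names to keypoints and hides weak poses and keypoints using the two confidence scores described above. It assumes the output shape shown in the single-pose example later in this post (a score plus a keypoints array of {position, score} objects in part-ID order). The helper name visibleKeypoints and its default thresholds are illustrative choices, not part of the PoseNet API.

```javascript
// PoseNet's 17 keypoint names in part-ID order (index 0 is the nose).
const PART_NAMES = [
  'nose', 'leftEye', 'rightEye', 'leftEar', 'rightEar',
  'leftShoulder', 'rightShoulder', 'leftElbow', 'rightElbow',
  'leftWrist', 'rightWrist', 'leftHip', 'rightHip',
  'leftKnee', 'rightKnee', 'leftAnkle', 'rightAnkle',
];

// Hypothetical helper: returns null when the pose confidence score is too low,
// otherwise the keypoints whose keypoint confidence score is high enough.
// The default thresholds are arbitrary starting points, not PoseNet defaults.
function visibleKeypoints(pose, minPoseScore = 0.15, minPartScore = 0.5) {
  if (pose.score < minPoseScore) return null;
  return pose.keypoints
    .map((kp, i) => ({ name: PART_NAMES[i], x: kp.position.x, y: kp.position.y, score: kp.score }))
    .filter((kp) => kp.score >= minPartScore);
}
```

Returning null for a weak pose lets drawing code skip the whole person in one check, while the per-keypoint filter hides only the unreliable joints.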

Part 1: Importing the TensorFlow.js and PoseNet Libraries

A lot of work went into abstracting away the complexities of the model and encapsulating functionality into easy-to-use methods. Let’s go over the basics of how to set up a PoseNet project.

The library can be installed with npm:

```shell
npm install @tensorflow-models/posenet
```

and imported using ES6 modules:

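```javascript
import * as posenet from '@tensorflow-models/posenet';

// top-level await needs an ES module; otherwise call this inside an async function
const net = await posenet.load();
```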

or via a bundle in the page:

```html
<html>
  <body>
    <!-- Load TensorFlow.js -->
    <script src="https://unpkg.com/@tensorflow/tfjs"></script>
    <!-- Load Posenet -->
    <script src="https://unpkg.com/@tensorflow-models/posenet"></script>
    <script type="text/javascript">
      posenet.load().then(function(net) {
        // posenet model loaded
      });
    </script>
  </body>
</html>
```

Part 2a: Single-person Pose Estimation

Example single-person pose estimation algorithm applied to an image. Image Credit: “Microsoft Coco: Common Objects in Context Dataset”, https://cocodataset.org

As stated before, the single-pose estimation algorithm is the simpler and faster of the two. Its ideal use case is for when there is only one person centered in an input image or video. The disadvantage is that if there are multiple persons in an image, keypoints from both persons will likely be estimated as being part of the same single pose — meaning, for example, that person #1’s left arm and person #2’s right knee might be conflated by the algorithm as belonging to the same pose. If there is any likelihood that the input images will contain multiple persons, the multi-pose estimation algorithm should be used instead.

Let’s review the inputs for the single-pose estimation algorithm:

- Input image element — An HTML element that contains an image to predict poses for, such as a video or image tag. Importantly, the image or video element fed in should be square.

- Image scale factor — A number between 0.2 and 1. Defaults to 0.50. What to scale the image by before feeding it through the network. Set this number lower to scale down the image and increase the speed when feeding through the network at the cost of accuracy.

- Flip horizontal — Defaults to false. If the poses should be flipped/mirrored horizontally. This should be set to true for videos where the video is by default flipped horizontally (i.e. a webcam), and you want the poses to be returned in the proper orientation.

- Output stride — Must be 32, 16, or 8. Defaults to 16. Internally, this parameter affects the height and width of the layers in the neural network. At a high level, it affects the accuracy and speed of the pose estimation. The lower the value of the output stride the higher the accuracy but slower the speed, the higher the value the faster the speed but lower the accuracy. The best way to see the effect of the output stride on output quality is to play with the single-pose estimation demo.

Now let’s review the outputs for the single-pose estimation algorithm:

- A pose, containing both a pose confidence score and an array of 17 keypoints.

- Each keypoint contains a keypoint position and a keypoint confidence score. Again, all the keypoint positions have x and y coordinates in the input image space, and can be mapped directly onto the image.

This short code block shows how to use the single-pose estimation algorithm:

```javascript
const imageScaleFactor = 0.50;
const flipHorizontal = false;
const outputStride = 16;

const imageElement = document.getElementById('cat');

// load the posenet model (inside an async function or an ES module)
const net = await posenet.load();

const pose = await net.estimateSinglePose(imageElement, imageScaleFactor, flipHorizontal, outputStride);
```

An example output pose looks like the following:

```
{
  "score": 0.32371445304906,
  "keypoints": [
    { // nose
      "position": {
        "x": 301.42237830162,
        "y": 177.69162777066
      },
      "score": 0.99799561500549
    },
    { // left eye
      "position": {
        "x": 326.05302262306,
        "y": 122.9596464932
      },
      "score": 0.99766051769257
    },
    { // right eye
      "position": {
        "x": 258.72196650505,
        "y": 127.51624706388
      },
      "score": 0.99926537275314
    },
    ...
  ]
}
```

Part 2b: Multi-person Pose Estimation

Example multi-person pose estimation algorithm applied to an image. Image Credit: “Microsoft Coco: Common Objects in Context Dataset”, https://cocodataset.org

The multi-person pose estimation algorithm can estimate many poses/persons in an image. It is more complex and slightly slower than the single-pose algorithm, but it has the advantage that if multiple people appear in a picture, their detected keypoints are less likely to be associated with the wrong pose. For that reason, even if the use case is to detect a single person’s pose, this algorithm may be more desirable.

Moreover, an attractive property of this algorithm is that performance is not affected by the number of persons in the input image. Whether there are 15 persons to detect or 5, the computation time will be the same.

Let’s review the inputs:

- Input image element — Same as single-pose estimation

- Image scale factor — Same as single-pose estimation

- Flip horizontal — Same as single-pose estimation

- Output stride — Same as single-pose estimation

- Maximum pose detections — An integer. Defaults to 5. The maximum number of poses to detect.

- Pose confidence score threshold — 0.0 to 1.0. Defaults to 0.5. At a high level, this controls the minimum confidence score of poses that are returned.

- Non-maximum suppression (NMS) radius — A number in pixels. At a high level, this controls the minimum distance between poses that are returned. This value defaults to 20, which is probably fine for most cases. It should be increased/decreased as a way to filter out less accurate poses but only if tweaking the pose confidence score is not good enough.

The best way to see what effect these parameters have is to play with the multi-pose estimation demo.
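To build intuition for how the last three parameters interact, here is a simplified, hypothetical sketch of greedy radius-based non-maximum suppression over candidate pose roots. It is not the PersonLab decoder that PoseNet actually uses; it assumes candidate root parts are already available as {x, y, score} objects in image pixels, and the function name selectPoseRoots is illustrative.

```javascript
// Simplified greedy non-maximum suppression over candidate pose roots.
// candidates: array of {x, y, score} in image pixels (hypothetical input).
function selectPoseRoots(candidates, maxPoseDetections = 5, scoreThreshold = 0.5, nmsRadius = 20) {
  const kept = [];
  // Visit the most confident candidates first.
  const sorted = [...candidates].sort((a, b) => b.score - a.score);
  for (const c of sorted) {
    if (kept.length >= maxPoseDetections) break;
    if (c.score < scoreThreshold) break; // sorted, so every remaining score is lower too
    // Skip candidates within nmsRadius pixels of a root that was already kept.
    const tooClose = kept.some((k) => Math.hypot(k.x - c.x, k.y - c.y) <= nmsRadius);
    if (!tooClose) kept.push(c);
  }
  return kept;
}

// The second candidate is suppressed (5 px from the first); the last is below the threshold.
const roots = selectPoseRoots([
  { x: 0, y: 0, score: 0.9 },
  { x: 5, y: 0, score: 0.8 },
  { x: 100, y: 0, score: 0.7 },
  { x: 200, y: 0, score: 0.3 },
]);
console.log(roots.length); // 2
```

Raising nmsRadius merges more nearby candidates into one detection, while raising scoreThreshold drops weak candidates before any distance check happens.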

Let’s review the outputs:

- A promise that resolves with an array of poses.

- Each pose contains the same information as described in the single-person estimation algorithm.

This short code block shows how to use the multi-pose estimation algorithm:

```javascript
const imageScaleFactor = 0.50;
const flipHorizontal = false;
const outputStride = 16;
// get up to 5 poses
const maxPoseDetections = 5;
// minimum confidence of the root part of a pose
const scoreThreshold = 0.5;
// minimum distance in pixels between the root parts of poses
const nmsRadius = 20;

const imageElement = document.getElementById('cat');

// load posenet
const net = await posenet.load();

const poses = await net.estimateMultiplePoses(
  imageElement, imageScaleFactor, flipHorizontal, outputStride,
  maxPoseDetections, scoreThreshold, nmsRadius);
```

An example output array of poses looks like the following:

```
// array of poses/persons
[
  { // pose #1
    "score": 0.42985695206067,
    "keypoints": [
      { // nose
        "position": {
          "x": 126.09371757507,
          "y": 97.861720561981
        },
        "score": 0.99710708856583
      },
      ...
    ]
  },
  { // pose #2
    "score": 0.13461434583673,
    "keypoints": [
      { // nose
        "position": {
          "x": 116.58444058895,
          "y": 99.772533416748
        },
        "score": 0.9978438615799
      },
      ...
    ]
  },
  ...
]
```

If you’ve read this far, you know enough to get started with the PoseNet demos. This is probably a good stopping point. If you’re curious to know more about the technical details of the model and implementation, we invite you to continue reading below.

For Curious Minds: A Technical Deep Dive

In this section, we’ll go into a little more technical detail regarding the single-pose estimation algorithm. At a high level, the process looks like this:

Single person pose detector pipeline using PoseNet

One important detail to note is that the researchers trained both a ResNet and a MobileNet model of PoseNet. While the ResNet model has a higher accuracy, its large size and many layers would make the page load time and inference time less-than-ideal for any real-time applications. We went with the MobileNet model as it’s designed to run on mobile devices.

Revisiting the Single-pose Estimation Algorithm

Processing Model Inputs: an Explanation of Output Strides

First we’ll cover how to obtain the PoseNet model outputs (mainly heatmaps and offset vectors) by discussing output strides.

Conveniently, the PoseNet model is image size invariant, which means it can predict pose positions in the same scale as the original image regardless of whether the image is downscaled. This means PoseNet can be configured to have a higher accuracy at the expense of performance by setting the output stride we’ve referred to above at runtime.

The output stride determines how much we’re scaling down the output relative to the input image size. It affects the size of the layers and the model outputs. The higher the output stride, the smaller the resolution of layers in the network and the outputs, and correspondingly their accuracy. In this implementation, the output stride can have values of 8, 16, or 32. In other words, an output stride of 32 will result in the fastest performance but lowest accuracy, while 8 will result in the highest accuracy but slowest performance. We recommend starting with 16.

The output stride determines how much we’re scaling down the output relative to the input image size. A higher output stride is faster but results in lower accuracy.

Under the hood, when the output stride is set to 8 or 16, the amount of input striding in the layers is reduced to create a larger output resolution. Atrous convolution is then used to enable the convolution filters in the subsequent layers to have a wider field of view (atrous convolution is not applied when the output stride is 32). While TensorFlow supported atrous convolution, TensorFlow.js did not, so we added a PR to include this.

Model Outputs: Heatmaps and Offset Vectors

When PoseNet processes an image, what is in fact returned is a heatmap along with offset vectors that can be decoded to find high confidence areas in the image that correspond to pose keypoints. We’ll go into what each of these mean in a minute, but for now the illustration below captures at a high level how each of the pose keypoints is associated with one heatmap tensor and an offset vector tensor.

Each of the 17 pose keypoints returned by PoseNet is associated with one heatmap tensor and one offset vector tensor used to determine the exact location of the keypoint.

Both of these outputs are 3D tensors with a height and width that we’ll refer to as the resolution. The resolution is determined by both the input image size and the output stride according to this formula:

```
Resolution = ((InputImageSize - 1) / OutputStride) + 1

// Example: an input image with a width of 225 pixels and an output
// stride of 16 results in an output resolution of 15
// 15 = ((225 - 1) / 16) + 1
```

Heatmaps

Each heatmap is a 3D tensor of size resolution x resolution x 17, since 17 is the number of keypoints detected by PoseNet. For example, with an image size of 225 and output stride of 16, this would be 15x15x17. Each slice in the third dimension (of 17) corresponds to the heatmap for a specific keypoint. Each position in that heatmap has a confidence score, which is the probability that a part of that keypoint type exists in that position. It can be thought of as the original image being broken up into a 15×15 grid, where the heatmap scores provide a classification of how likely each keypoint exists in each grid square.

Offset Vectors

Each offset vector is a 3D tensor of size resolution x resolution x 34, where 34 is the number of keypoints * 2. With an image size of 225 and output stride of 16, this would be 15x15x34. Since heatmaps are an approximation of where the keypoints are, the offset vectors correspond in location to the heatmap points, and are used to predict the exact location of the keypoints by traveling along the vector from the corresponding heatmap point. The first 17 slices of the offset vector contain the y component of the vector and the last 17 the x component, matching the [y, x] ordering of the offsetVector formula used when decoding below. The offset vector sizes are in the same scale as the original image.
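As a quick check of the resolution formula and the two tensor shapes just described, here is a small sketch. It assumes a square input whose size satisfies the formula exactly (like the 225-pixel example) and rejects other sizes rather than guessing how the library resizes them; that check and the function names outputResolution and outputShapes are assumptions of this sketch.

```javascript
// Output resolution for a square input, per the formula above.
function outputResolution(inputSize, outputStride) {
  if (![8, 16, 32].includes(outputStride)) {
    throw new Error('outputStride must be 8, 16, or 32');
  }
  // Simplifying assumption of this sketch: only accept sizes where the formula is exact.
  if ((inputSize - 1) % outputStride !== 0) {
    throw new Error('(inputSize - 1) must be divisible by outputStride');
  }
  return (inputSize - 1) / outputStride + 1;
}

// Shapes of the heatmap and offset-vector tensors for a given input (17 keypoints).
function outputShapes(inputSize, outputStride) {
  const r = outputResolution(inputSize, outputStride);
  return {
    heatmap: [r, r, 17],     // one score per keypoint per grid cell
    offsets: [r, r, 2 * 17], // two offset components per keypoint per grid cell
  };
}

console.log(outputShapes(225, 16)); // heatmap 15 x 15 x 17, offsets 15 x 15 x 34
```

The same 225-pixel input gives a 29x29 grid with an output stride of 8 and an 8x8 grid with an output stride of 32, which is the accuracy/speed trade-off described earlier.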

Estimating Poses from the Outputs of the Model

After the image is fed through the model, we perform a few calculations to estimate the pose from the outputs. The single-pose estimation algorithm, for example, returns a pose that contains a pose confidence score and an array of keypoints (indexed by part ID), each with a confidence score and an x, y position.

To get the keypoints of the pose:

- A sigmoid activation is done on the heatmap to get the scores.

- scores = heatmap.sigmoid()

- argmax2d is done on the keypoint confidence scores to get the x and y index in the heatmap with the highest score for each part, which is essentially where the part is most likely to exist. This produces a tensor of size 17×2, with each row being the y and x index in the heatmap with the highest score for each part.

- heatmapPositions = scores.argmax(y, x)

- The offset vector for each part is retrieved by getting the x and y from the offsets corresponding to the x and y index in the heatmap for that part. This produces a tensor of size 17×2, with each row being the offset vector for the corresponding keypoint. For example, for the part at index k, when the heatmap position is y and x, the offset vector is:

- offsetVector = [offsets.get(y, x, k), offsets.get(y, x, 17 + k)]

- To get the keypoint, each part’s heatmap x and y are multiplied by the output stride then added to their corresponding offset vector, which is in the same scale as the original image.

- keypointPositions = heatmapPositions * outputStride + offsetVectors

- Finally, each keypoint confidence score is the confidence score of its heatmap position. The pose confidence score is the mean of the scores of the keypoints.
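The steps above can be sketched in plain JavaScript on nested arrays instead of TensorFlow.js tensors. This is an illustrative reimplementation, not the library’s code: it assumes heatmap[y][x][k] holds the raw (pre-sigmoid) heatmap values, and that offsets[y][x][k] and offsets[y][x][17 + k] hold the y and x components of part k’s offset vector, following the offsetVector formula above. The function name decodeSinglePose is hypothetical.

```javascript
const NUM_KEYPOINTS = 17;
const sigmoid = (v) => 1 / (1 + Math.exp(-v));

// heatmap: R x R x 17 raw values; offsets: R x R x 34; returns {score, keypoints}.
function decodeSinglePose(heatmap, offsets, outputStride) {
  const height = heatmap.length;
  const width = heatmap[0].length;
  const keypoints = [];
  for (let k = 0; k < NUM_KEYPOINTS; k++) {
    // argmax2d: the grid cell with the highest sigmoid score for part k.
    let best = { y: 0, x: 0, score: -Infinity };
    for (let y = 0; y < height; y++) {
      for (let x = 0; x < width; x++) {
        const score = sigmoid(heatmap[y][x][k]);
        if (score > best.score) best = { y, x, score };
      }
    }
    // Offset vector [dy, dx] for part k at that cell, in image pixels.
    const dy = offsets[best.y][best.x][k];
    const dx = offsets[best.y][best.x][NUM_KEYPOINTS + k];
    keypoints.push({
      // keypointPosition = heatmapPosition * outputStride + offsetVector
      position: { x: best.x * outputStride + dx, y: best.y * outputStride + dy },
      score: best.score,
    });
  }
  // Pose confidence score: mean of the keypoint scores.
  const score = keypoints.reduce((sum, kp) => sum + kp.score, 0) / NUM_KEYPOINTS;
  return { score, keypoints };
}
```

Because the sigmoid is monotonic, taking the argmax before or after applying it picks the same cell; applying it first simply gives the keypoint scores directly.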

Multi-person Pose Estimation

The details of the multi-pose estimation algorithm are outside of the scope of this post. Mainly, that algorithm differs in that it uses a greedy process to group keypoints into poses by following displacement vectors along a part-based graph. Specifically, it uses the fast greedy decoding algorithm from the research paper PersonLab: Person Pose Estimation and Instance Segmentation with a Bottom-Up, Part-Based, Geometric Embedding Model. For more information on the multi-pose algorithm please read the full research paper or look at the code.

It’s our hope that as more models are ported to TensorFlow.js, the world of machine learning becomes more accessible, welcoming, and fun to new coders and makers. PoseNet on TensorFlow.js is a small attempt at making that possible.

Detection of human activity and movement by applying posture recognition to video feeds:

- AI models detect the following posture states: lying down, sitting, walking, and standing.

- Track changes in human postures to determine specific events (standing up, sitting down, falling down).

- Determine the time between specific posture-changing events.
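The last two points can be sketched as a small post-processing step that runs after a posture classifier. This is a hypothetical example: it assumes each processed video frame has already been labeled with one of the posture states above plus a timestamp in seconds, and the mapping from posture changes to event names is an illustrative choice.

```javascript
// Hypothetical mapping from a posture change ("previous->current") to an event name.
const EVENTS = {
  'sitting->standing': 'standing up',
  'lying->standing': 'standing up',
  'standing->sitting': 'sitting down',
  'walking->sitting': 'sitting down',
  'standing->lying': 'falling down',
  'walking->lying': 'falling down',
};

// samples: [{ t, posture }] sorted by time t (seconds), one per processed frame.
function detectPostureEvents(samples) {
  const events = [];
  for (let i = 1; i < samples.length; i++) {
    const name = EVENTS[`${samples[i - 1].posture}->${samples[i].posture}`];
    if (!name) continue; // no posture change, or a change we do not track
    const previous = events[events.length - 1];
    events.push({
      name,
      t: samples[i].t,
      // time since the previous posture-changing event (null for the first one)
      sincePrevious: previous ? samples[i].t - previous.t : null,
    });
  }
  return events;
}

const events = detectPostureEvents([
  { t: 0, posture: 'sitting' },
  { t: 1, posture: 'sitting' },
  { t: 2, posture: 'standing' },
  { t: 5, posture: 'walking' },
  { t: 7, posture: 'lying' },
]);
console.log(events.map((e) => `${e.name} at ${e.t}s`)); // standing up at 2s, falling down at 7s
```

In practice a real pipeline would likely also smooth the per-frame labels before this step, so that a single misclassified frame does not register as an event.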

Introduction

Deep learning is a subset of machine learning and artificial intelligence that imitates the way humans gain certain types of knowledge. It is essentially a neural network with three or more layers. Deep learning helps solve many artificial intelligence problems, improving automation and performing analytical and physical tasks without human intervention, and thus enables disruptive applications and techniques. One such application is human pose detection.

What you’ll Learn

- What is PoseNet?

- How Does PoseNet Work?

- Applications of Posture Detection in real-time

- Implementing Posture Detection using PoseNet

- Prerequisite points to remember

- Coding the Complete Project from Scratch

- Deploy on GitHub

- End Notes

What is PoseNet?

PoseNet is a real-time pose detection technique with which you can detect human poses in images or videos. It supports both single-pose detection (one person) and multi-pose detection (multiple people). In simple words, PoseNet is a deep learning TensorFlow model that allows you to estimate human pose by detecting body parts such as elbows, hips, wrists, knees, and ankles, and to form a skeleton structure of your pose by joining these points.

How does PoseNet work?

PoseNet, as used here, is built on the MobileNet architecture. MobileNet is a convolutional neural network developed by Google and trained on the ImageNet dataset; it is mainly used for image classification and as a feature extractor for tasks such as object detection. It is a lightweight model that uses depthwise separable convolutions to reduce the number of parameters and the computational cost while keeping accuracy competitive. You can find many articles about MobileNet online.
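The saving from depthwise separable convolutions is easy to see by counting weights. The sketch below compares a standard 3x3 convolution with its depthwise separable counterpart for an illustrative layer with 32 input and 64 output channels, ignoring bias terms; the channel counts are arbitrary examples, not MobileNet’s actual layer sizes.

```javascript
// Weights (ignoring biases) of a standard k x k convolution from cIn to cOut channels.
function standardConvParams(k, cIn, cOut) {
  return k * k * cIn * cOut;
}

// Depthwise separable version: one k x k filter per input channel (depthwise),
// followed by a 1 x 1 convolution that mixes channels (pointwise).
function depthwiseSeparableParams(k, cIn, cOut) {
  return k * k * cIn + cIn * cOut;
}

const standard = standardConvParams(3, 32, 64);        // 18432
const separable = depthwiseSeparableParams(3, 32, 64); // 288 + 2048 = 2336
console.log((standard / separable).toFixed(1));        // "7.9" (times fewer weights)
```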

The pre-trained models run in the browser, which is what differentiates PoseNet from other API-dependent libraries. Hence, anyone with a modestly configured laptop or desktop can easily use such models and build good projects.

PoseNet gives us a total of 17 keypoints to work with, from the eyes and ears down to the knees and ankles.

Image 1

For every detected pose and keypoint, PoseNet also returns a confidence score in its JSON-like output, so when the input image is not clear you can see how confident the model is in each detection.

Real-World Applications of Pose Detection Used by Organizations

1) Snapchat filters, where you see tongue, spectacles, and dummy-face effects.

2) Fitness apps such as Cult, which detect your exercise poses.

3) Instagram Reels, which uses posture detection to offer different effects applied to your face and surroundings.

4) Virtual games that analyze players’ shots.

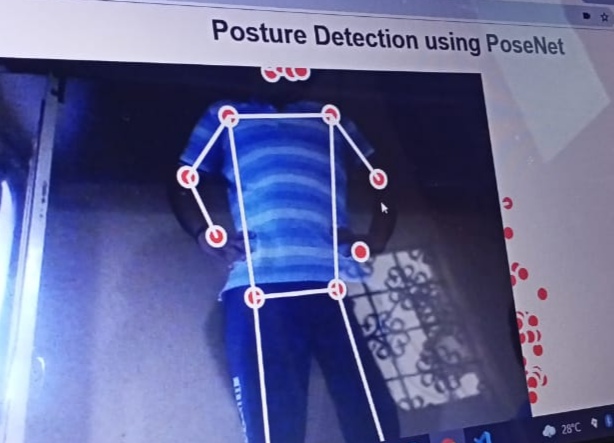

Implementing Posture Detection using PoseNet

Now that we have a theoretical understanding of PoseNet and why it is used, let’s jump right into the coding environment and implement the pose detection project.

How We Will Implement the Project

We will not follow the Python route for this project; instead we will use JavaScript, because all the work happens in the browser, and running Python in the browser is impractical (Python is normally run on the server). TensorFlow provides a popular library, TensorFlow.js, that lets models run on the client system.

If you haven’t studied machine learning with JavaScript before, there is no need to worry. It is simple to follow, and I will make sure everything is crystal clear. There is not much JavaScript to write, only a few lines of code.

Let’s get started.

You can use any IDE or editor to implement the project, such as Visual Studio Code or Sublime Text.

1) Boilerplate Template

Create a new folder and create one HTML file, which will serve as the website for users. This is where we will import our JavaScript file and the machine learning and deep learning libraries that we will use.

Posture Detection using PoseNet

2) p5.js

It is a JavaScript library used for creative coding. It is based on Processing, a creative-coding environment written in Java for desktop applications; when the same capabilities were needed on websites, p5.js was created. Creative coding here means that you can draw shapes and figures such as lines, rectangles, squares, circles, and points in the browser in a creative way (colored or animated) simply by calling a built-in function and passing the position and size of the shape you want.

Create one JavaScript file; here we will learn p5.js and see why we are using this library. Before writing anything in the JavaScript file, import p5.js and add a link to the JavaScript file you created in the HTML file.

There are two basic functions in p5.js that you implement. Write the code below in the JavaScript file.

a) setup – In this function, you write code related to the basic configuration of your interface. One thing you create here is the canvas, along with its size, and everything you draw will appear on this canvas only. Its job is to set everything up.

```javascript
function setup() { // this function runs only once, at startup
  createCanvas(800, 500);
}
```

b) draw – The second function is draw, where you draw everything you want: shapes, images, video. All the rendering code goes in this function. You can think of it as the main function in compiled languages. Its job is to display things on the screen.

Let’s try drawing some shapes to get hands-on experience with the p5.js library. The best thing is that each figure has a built-in function, and you only need to call it and pass some coordinates to draw a shape. To give the canvas a background color, call the background function and pass a color value.

i) point – to draw a simple point, call the point function and pass the x and y coordinates.

ii) line – a line connects two points, so you only have to call the line function and pass the coordinates of both points, which means 4 numbers.

iii) rectangle – call the rect function and pass the x and y coordinates of its top-left corner followed by its width and height. If the width and height are the same, it will be a square.

Some other functions useful for creative styling are:

i) stroke – sets the color of the outline of a shape.

ii) strokeWeight – sets how thick the outline should be.

iii) fill – sets the color used to fill the shape.

Below is a code snippet with an example for each function we learned. Try this code and observe the figures in the browser by serving the HTML file, for example with a live server.

```javascript
function draw() {
  background(200);

  // 1. point
  point(200, 200);
  // 2. line
  line(200, 200, 300, 300);
  // 3. triangle
  triangle(100, 200, 300, 400, 150, 250);
  // 4. rectangle
  rect(250, 200, 200, 100);
  // 5. circle
  ellipse(100, 200, 100, 100);

  // color circles using stroke and fill
  /*
  fill(127, 102, 34);
  stroke(255, 0, 0);
  ellipse(100, 200, 100, 100);
  stroke(0, 255, 0);
  ellipse(300, 320, 100, 100);
  stroke(0, 0, 255);
  ellipse(400, 400, 100, 100);
  */
}
```

An important feature of p5.js is that the setup function runs only once to set things up, while the code in the draw function runs in a loop (by default about 60 times per second) for as long as the page is open. You can check this by printing something with console.log. Using this, you can create amazing designs. With p5.js you can also load images and capture images and video.

```javascript
function getRandomArbitrary(min, max) { // generate a random number in [min, max)
  return Math.random() * (max - min) + min;
}

/*
const r = getRandomArbitrary(0, 255);
const g = getRandomArbitrary(0, 255);
const b = getRandomArbitrary(0, 255);
fill(r, g, b);
ellipse(mouseX, mouseY, 50, 50);
*/
```

Place the new function above draw, paste the commented-out code inside the draw function, run it, and observe the changes in the browser to experience the magic of the p5.js library.

3) ml5.js

The web is the easiest way to share applications with others: you only share a URL, and others can use the application on their own systems. With this in mind, Google built TensorFlow.js, but working with TensorFlow.js directly requires a deep understanding of it. ml5.js builds a wrapper around TensorFlow.js that simplifies common tasks into a few function calls, so you deal with TensorFlow.js indirectly through ml5.js. You can read more about this in the official ml5.js documentation.

Hence, it is the main library for this project, providing various deep learning models on which you can build projects. In this project, we use the PoseNet model that is included in this library.

Let’s import the library and use it. In the HTML file, add a script tag that loads the ml5.js library.

Now let’s set up the video capture and load the PoseNet model. The capture variable is global, and all the variables we create have global scope.

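```javascript
// note: this targets the ml5.js 0.x API; newer 1.x releases replaced poseNet with bodyPose
// global variables shared by setup() and draw()
let capture;
let posenet;
let singlePose;
let skeleton;

function setup() { // this function runs only once, at startup
  createCanvas(800, 500);
  // capture webcam video and hide the default HTML video element
  capture = createCapture(VIDEO);
  capture.hide();

  // load the PoseNet model; modelLoaded is called once it is ready
  posenet = ml5.poseNet(capture, modelLoaded);

  // receivedPoses is called every time new poses are detected
  posenet.on('pose', receivedPoses);
}

function modelLoaded() {
  console.log('PoseNet model loaded');
}

function receivedPoses(poses) {
  console.log(poses);
  if (poses.length > 0) {
    // keep only the first detected person
    singlePose = poses[0].pose;
    skeleton = poses[0].skeleton;
  }
}
```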

As the code loads and runs, PoseNet detects 17 body points (5 facial points and 12 body points), along with the pixel coordinates at which each point was detected. If you print these poses, you get an array (like a Python list) of objects (like Python dictionaries), each with two keys, pose and skeleton, which we accessed above.

- pose – again an object, whose keys include keypoints (a list of all detected points) and the individual parts such as leftEye, leftEar, nose, etc.

- skeleton – a list in which each entry holds two connected points (at index 0 and 1), each with a confidence score, part name, and position coordinates. We can use these pairs to draw lines and construct the skeleton structure.

Now, if you want to display a single point on the pose, you can do so using these individual points in pose.

How do we display all the points and connect them into a skeleton?

The pose object has a keypoints list in which each entry holds the x and y coordinates of one point, so we can loop over keypoints, access the position of each one, and use its x and y coordinates.

To draw the lines, we can use the second object, skeleton, which holds the coordinates of each pair of body parts to connect.

```javascript
function draw() {
  // draw the current webcam frame
  image(capture, 0, 0);
  fill(255, 0, 0);

  if (singlePose) { // only once someone has been detected
    // draw a circle with a diameter of 20 pixels at every estimated keypoint
    for (let i = 0; i < singlePose.keypoints.length; i++) {
      ellipse(singlePose.keypoints[i].position.x, singlePose.keypoints[i].position.y, 20);
    }

    stroke(255, 255, 255);
    strokeWeight(5);
    // construct the skeleton structure by joining each pair of parts with a line
    for (let j = 0; j < skeleton.length; j++) {
      line(skeleton[j][0].position.x, skeleton[j][0].position.y, skeleton[j][1].position.x, skeleton[j][1].position.y);
    }
  }
}
```
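The draw loop above draws every keypoint and bone, including ones with low confidence scores. One possible refinement, sketched below as a hypothetical helper, is to filter by confidence first. It assumes the ml5.js result shape used above (pose.keypoints entries with score, part, and position, and skeleton entries as pairs of such keypoints); the name poseToDrawables and the 0.3 threshold are arbitrary choices for illustration.

```javascript
// Hypothetical helper: keep only confident keypoints and skeleton bones,
// returned as plain numbers so the p5.js drawing code stays simple.
function poseToDrawables(pose, skeleton, minScore = 0.3) {
  const points = pose.keypoints
    .filter((kp) => kp.score >= minScore)
    .map((kp) => ({ part: kp.part, x: kp.position.x, y: kp.position.y }));
  const bones = skeleton
    .filter(([a, b]) => a.score >= minScore && b.score >= minScore)
    .map(([a, b]) => [a.position.x, a.position.y, b.position.x, b.position.y]);
  return { points, bones };
}

// Possible use inside draw(), in place of the two loops:
// const { points, bones } = poseToDrawables(singlePose, skeleton);
// for (const p of points) ellipse(p.x, p.y, 20);
// for (const b of bones) line(...b);
```

Keeping the filtering in a plain function, separate from the p5.js calls, also makes it easy to test without a webcam.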

Make sure you are in good light: detection is sometimes inaccurate with blurry frames or dark backgrounds.

How to Superimpose Images?

Now we will learn how to superimpose images on the face, or at any other location, as you see in various filters. It may seem a little silly and funny, but features like this are a big draw for many social media apps.

Just load the images in the setup function, then position them with the image function at the coordinates where you want them displayed, in the draw function just after the end of the skeleton for loop. Suppose we are displaying spectacles and a cigar.

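```javascript
// declared globally, next to the other variables
let specs;
let smoke;

// in setup(): load the images
specs = loadImage('images/spects.png');
smoke = loadImage('images/cigar.png');

// in draw(), inside the if (singlePose) block after the skeleton loop:
// apply the specs above the nose and the cigar below it
image(specs, singlePose.nose.x - 40, singlePose.nose.y - 70, 125, 125);
image(smoke, singlePose.nose.x - 35, singlePose.nose.y + 28, 50, 50);
```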

All the images are kept in a separate folder named images, and we load each one with the loadImage function. The spectacles are drawn above the nose and the cigar below it. The complete code link is given below for your reference.

Deploy the Project

As the project runs entirely in the browser, you can simply deploy it on GitHub and make it available for others to use. Upload all the files and images to a new GitHub repository, keeping the same structure as on your local system. After uploading, open the repository’s Settings, go to the GitHub Pages section, change the source from None to the main branch, and click Save. GitHub will give you the project URL, which goes live after a short while and can be shared with others.

Check live demo ~ Posture Detection using PoseNet

Access Code files for reference ~ GitHub

End Notes

Hurray! We have created a complete end-to-end posture detection project using a pre-trained PoseNet model. I hope all the concepts were easy to follow; I understand that if you are seeing machine learning with JavaScript for the first time, it can feel a little hard. But believe me, it’s simple: go through the article once more and try it yourself with different configurations and designs.

We have worked on single-person pose detection; I encourage you to try multi-person pose detection next. You can also try adding different overlay options, or adjust the points so they work well with all cameras. There are many ways you can take this project further.

For a deeper understanding, please see the references below.

TensorFlow Blog – Real-time human pose estimation

ML5.js documentation – Official Documentation

Labeling

LabelImg

LabelImg is a graphical image annotation tool. It is written in Python and uses Qt for its graphical interface. Annotations are saved as XML files in PASCAL VOC format, the format used by ImageNet. Besides, it also supports YOLO and CreateML formats.

https://pypi.org/project/labelImg/

LabelImg is now part of the Label Studio community. The popular image annotation tool created by Tzutalin is no longer actively being developed, but you can check out Label Studio, the open source data labeling tool for images, text, hypertext, audio, video, and time-series data.

https://github.com/heartexlabs/labelImg/releases

Roboflow

Roboflow Annotate Quickly Label Training Data and Export To Any Format

https://blog.roboflow.com/labelimg/

V7 Labeling

https://www.v7labs.com/blog/labelimg-guide

Viso.ai

https://viso.ai/computer-vision/labelimg-for-image-annotation/