A Tensor Processing Unit (TPU) is an AI accelerator application-specific integrated circuit (ASIC) developed by Google for neural network machine learning, particularly with Google’s own TensorFlow software.

Machine learning has produced business and research breakthroughs ranging from network security to medical diagnoses.

Cloud TPU is the custom-designed machine learning ASIC that powers Google products like Translate, Photos, Search, Assistant, and Gmail.

Built for AI on Google Cloud

Cloud TPU is designed to run cutting-edge machine learning models with AI services on Google Cloud. Its custom high-speed network links TPUs into a pod that offers over 100 petaflops of performance, enough computational power to transform your business or create the next research breakthrough.

Iterate faster on your ML solutions

Training machine learning models is like compiling code: you need to update often, and you want to do so as efficiently as possible. ML models need to be trained over and over as apps are built, deployed, and refined. Cloud TPU’s robust performance and low cost make it ideal for machine learning teams looking to iterate quickly and frequently on their solutions.

Proven, state-of-the-art models

You can build your own machine learning-powered solutions for many real-world use cases. Just bring your data, download a Google-optimized reference model, and start training.

Cloud TPU offering

Cloud TPU v2

180 teraflops, 64 GB High Bandwidth Memory (HBM)

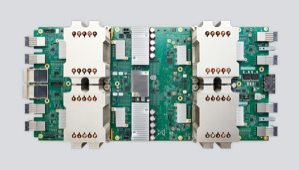

Cloud TPU v3

420 teraflops, 128 GB HBM

Cloud TPU v2 Pod

11.5 petaflops, 4 TB HBM, 2-D toroidal mesh network

Cloud TPU v3 Pod

100+ petaflops, 32 TB HBM, 2-D toroidal mesh network
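The single-device and pod figures in this listing can be cross-checked with some simple arithmetic. The sketch below is only a rough estimate. It assumes that a pod is built from the single Cloud TPU devices listed above, that 1 petaflop equals 1,000 teraflops, and that 1 TB equals 1,024 GB; none of these assumptions is stated in the listing itself. The names `SPECS` and `implied_devices` are made up for this illustration.

```python
# Peak figures as quoted in the Cloud TPU offering list (not measured values).
SPECS = {
    "v2": {"device_tflops": 180, "device_hbm_gb": 64,
           "pod_pflops": 11.5, "pod_hbm_tb": 4},
    # The v3 pod is quoted as "100+" petaflops, so 100 is a lower bound.
    "v3": {"device_tflops": 420, "device_hbm_gb": 128,
           "pod_pflops": 100, "pod_hbm_tb": 32},
}


def implied_devices(spec):
    """Estimate devices per pod two ways: from compute and from memory.

    Assumes 1 petaflop = 1000 teraflops and 1 TB = 1024 GB.
    """
    by_compute = spec["pod_pflops"] * 1000 / spec["device_tflops"]
    by_memory = spec["pod_hbm_tb"] * 1024 / spec["device_hbm_gb"]
    return by_compute, by_memory


if __name__ == "__main__":
    for name, spec in SPECS.items():
        by_compute, by_memory = implied_devices(spec)
        print(f"{name}: ~{by_compute:.1f} devices by compute, "
              f"{by_memory:.0f} devices by memory")
```

Under these assumptions both estimates for the v2 Pod land at about 64 devices. For the v3 Pod the memory figure implies 256 devices, while the compute figure gives roughly 238, which is consistent with the pod being quoted as "100+" petaflops rather than exactly 100.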

Model library

Get started immediately by leveraging our growing library of optimized models for Cloud TPU. These provide optimized performance, accuracy, and quality in image classification, object detection, language modeling, speech recognition, and more.

Connect Cloud TPUs to custom machine types

You can connect to Cloud TPUs from custom AI Platform Deep Learning VM Image types, which can help you optimally balance processor speeds, memory, and high-performance storage resources for your workloads.

Fully integrated with Google Cloud Platform

At their core, Cloud TPUs and Google Cloud’s data and analytics services are fully integrated with other Google Cloud Platform offerings, like Google Kubernetes Engine (GKE). So when you run machine learning workloads on Cloud TPUs, you benefit from GCP’s industry-leading storage, networking, and data analytics technologies.

Tensor Processing Unit (TPU) 3.0: Google’s answer to cloud-ready Artificial Intelligence

A TPU 3.0 pod is expected to crunch numbers at approximately 100 petaflops, compared with the 11.5 petaflops delivered by a TPU 2.0 pod. Google CEO Sundar Pichai did not comment on the precision of the processing in these benchmarks, something that can make a lot of difference in real-world applications.
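To see why the precision behind a petaflops figure matters, here is a minimal sketch that emulates a reduced-precision format in plain Python. It uses bfloat16 purely as an illustration; the text above does not say which precision the quoted benchmarks used. The emulation truncates a float32 value to its upper 16 bits, which is a simplification of real hardware rounding, and the helper names are hypothetical.

```python
import struct


def to_float32(x: float) -> float:
    """Round a Python float (64-bit) to the nearest float32 value."""
    return struct.unpack(">f", struct.pack(">f", x))[0]


def to_bfloat16(x: float) -> float:
    """Emulate bfloat16 by keeping only the top 16 bits of the float32 encoding.

    This truncates instead of rounding to nearest, which is an assumption
    made to keep the sketch short.
    """
    bits = struct.unpack(">I", struct.pack(">f", x))[0]
    return struct.unpack(">f", struct.pack(">I", bits & 0xFFFF0000))[0]


def accumulate(values, quantize):
    """Sum values, quantizing each input and every running total."""
    total = 0.0
    for v in values:
        total = quantize(total + quantize(v))
    return total


if __name__ == "__main__":
    ones = [1.0] * 1000
    print("float32 sum: ", accumulate(ones, to_float32))
    print("bfloat16 sum:", accumulate(ones, to_bfloat16))
```

The float32 sum reaches 1000.0, while the emulated bfloat16 sum stalls at 256.0, because the 8-bit significand can no longer represent 257. Two accelerators with the same headline operation count can therefore give very different numerical results, depending on the precision those operations run at.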