Unlike conventional self-driving systems, Wayve aims to create a scalable, mapless, and vehicle-agnostic autonomy platform powered by AI foundation models.

Wayve’s approach is based on Embodied AI, where intelligence emerges from interaction with the real world rather than predefined rules.

Key Idea

- The AI learns to drive as a human does, by:

  - Observing

  - Interacting

  - Adapting

This contrasts with traditional systems that rely on:

- Predefined rules

- HD maps

- Modular pipelines

Capabilities

- Learns from real-world driving data

- Generalizes to new environments

- Handles unpredictable scenarios (“long tail problem”)

Wayve specializes in developing AI foundation models for autonomous driving. Its technology equips vehicles with a ‘robot brain’ that can learn from and interact with real-world environments.

Optimized for safe driving

Embodied AI uses a domain-optimized model architecture that prioritizes automotive safety, resulting in safe and natural driving performance.

Solves the long-tail problem

Embodied AI generalizes well, allowing it to apply learned driving skills to unexpected scenarios, even ones it was never exposed to during training.

Efficient and large-scale learning

Wayve’s self-supervised learning method enables efficient, large-scale learning, which is essential for adapting AI capabilities to new vehicles and geographies.

3. AV2.0 Architecture (End-to-End Driving Model)

Wayve defines its architecture as AV2.0, a next-generation autonomous driving stack.

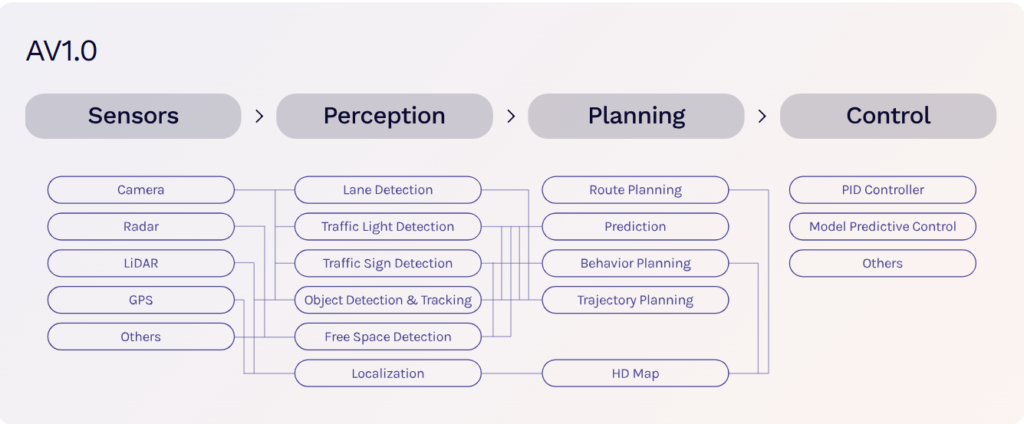

3.1 Traditional AV1.0 (Legacy Approach)

Sensors → Perception → Localization → Planning → Control

- Modular pipeline

- Heavy reliance on:

  - LiDAR

  - HD maps

  - Rule-based logic
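
The modular pipeline above can be pictured as a chain of separately written stages with hand-defined interfaces between them. The following is a minimal sketch under stated assumptions, not any vendor's actual stack: every function name, the toy `hd_map` dictionary, the 10 m safe gap, and the proportional gain are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Obstacle:
    distance_m: float  # distance to the nearest object ahead


def perception(lidar_ranges_m):
    """Toy perception: treat the nearest LiDAR return as the only obstacle."""
    return Obstacle(distance_m=min(lidar_ranges_m))


def localization(position_m, hd_map):
    """Toy localization: look up the speed limit of the current map segment."""
    segment = int(position_m // hd_map["segment_length_m"])
    return hd_map["speed_limits_mps"][segment]


def planning(obstacle, speed_limit_mps, safe_gap_m=10.0):
    """Rule-based planner: stop if the obstacle is inside the safe gap."""
    return 0.0 if obstacle.distance_m < safe_gap_m else speed_limit_mps


def control(current_speed_mps, target_speed_mps, gain=0.5):
    """Proportional controller: positive = accelerate, negative = brake."""
    return gain * (target_speed_mps - current_speed_mps)


def av1_step(lidar_ranges_m, position_m, hd_map, current_speed_mps):
    """Sensors -> Perception -> Localization -> Planning -> Control."""
    obstacle = perception(lidar_ranges_m)
    speed_limit = localization(position_m, hd_map)
    target = planning(obstacle, speed_limit)
    return control(current_speed_mps, target)


# Hypothetical two-segment map used only for this example.
hd_map = {"segment_length_m": 100.0, "speed_limits_mps": [13.0, 8.0]}
command = av1_step([40.0, 25.0], 150.0, hd_map, current_speed_mps=6.0)
```

Note that `localization` fails outright when the vehicle leaves the mapped segments, which illustrates why this style of stack depends on pre-built maps.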

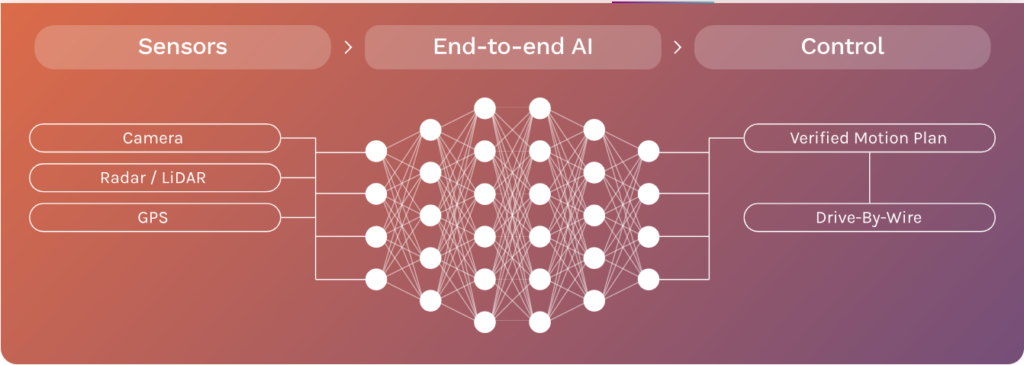

3.2 Wayve AV2.0 (End-to-End AI)

Sensors (camera/radar) → Neural Network → Driving Actions

- Single deep neural network

- Direct mapping:

  - Raw sensor input → steering, braking, acceleration

👉 Eliminates intermediate modules
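
As a rough illustration of the direct mapping described above, the sketch below passes a flat list of sensor values through a single small network and reads off steering, braking, and acceleration. It is an assumption-laden toy, not Wayve's model: the class name `TinyDrivingPolicy`, the layer sizes, the random untrained weights, and the output ranges are all hypothetical.

```python
import math
import random


class TinyDrivingPolicy:
    """One network from raw sensor values to driving actions (untrained toy)."""

    def __init__(self, n_inputs, n_hidden=8, seed=0):
        rng = random.Random(seed)
        # Randomly initialised weights; a real system would learn these from data.
        self.w1 = [[rng.uniform(-0.5, 0.5) for _ in range(n_inputs)]
                   for _ in range(n_hidden)]
        self.w2 = [[rng.uniform(-0.5, 0.5) for _ in range(n_hidden)]
                   for _ in range(3)]

    def __call__(self, sensor_values):
        # Hidden layer: weighted sum of the inputs followed by tanh.
        hidden = [math.tanh(sum(w * x for w, x in zip(row, sensor_values)))
                  for row in self.w1]
        # Output layer: three raw scores, one per driving action.
        raw = [sum(w * h for w, h in zip(row, hidden)) for row in self.w2]
        return {
            "steering": math.tanh(raw[0]),                    # -1 (left) .. 1 (right)
            "braking": 1.0 / (1.0 + math.exp(-raw[1])),       # 0 .. 1
            "acceleration": 1.0 / (1.0 + math.exp(-raw[2])),  # 0 .. 1
        }


policy = TinyDrivingPolicy(n_inputs=4)
actions = policy([0.1, 0.2, 0.3, 0.4])  # stand-in for camera/radar features
```

There is no perception, localization, or planning function anywhere in this sketch; whatever intermediate structure exists lives inside the learned weights.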

3.3 Key Architectural Innovations

a. End-to-End Learning

- Entire driving task learned jointly

- Reduces system complexity

- Improves adaptability

b. Self-Supervised Learning

- Trains on unlabeled data

- Eliminates costly manual annotation
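
One common way to train on unlabeled data is to let the data supply its own targets, for example by asking the model to predict the next frame from the preceding ones. The source does not say which objective Wayve uses, so next-frame prediction here is an assumption, and the helper `next_frame_pairs` is a hypothetical name.

```python
def next_frame_pairs(frames, context=2):
    """Turn an unlabeled frame sequence into (context_frames, target_frame) pairs.

    No human annotation is needed: each target is simply the frame that
    actually followed the context in the recorded drive.
    """
    pairs = []
    for t in range(context, len(frames)):
        pairs.append((frames[t - context:t], frames[t]))
    return pairs


# Strings stand in for camera frames from a recorded drive.
pairs = next_frame_pairs(["f0", "f1", "f2", "f3"], context=2)
```

Each recorded drive of N frames yields N minus `context` training examples at no labeling cost.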

c. World Models

- The AI builds an internal representation of the environment

- Predicts:

  - Vehicle motion

  - Other agents’ behavior
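
To make the idea of predicting vehicle motion and other agents' behavior concrete, the sketch below rolls a scene forward in time and checks how close the ego vehicle would get to a lead vehicle. It is only a stand-in: a learned world model is replaced here by a hand-written constant-velocity rule, and `AgentState`, `predict_next`, `rollout`, and `min_future_gap` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentState:
    x_m: float    # position along the lane
    v_mps: float  # speed


def predict_next(state, dt_s=0.5):
    """Constant-velocity stand-in for a learned one-step prediction."""
    return AgentState(state.x_m + state.v_mps * dt_s, state.v_mps)


def rollout(ego, others, steps, dt_s=0.5):
    """Imagine `steps` future states for the ego vehicle and other agents."""
    trajectory = []
    for _ in range(steps):
        ego = predict_next(ego, dt_s)
        others = [predict_next(agent, dt_s) for agent in others]
        trajectory.append((ego, others))
    return trajectory


def min_future_gap(ego, lead, steps, dt_s=0.5):
    """Smallest predicted gap to the lead vehicle over the imagined horizon."""
    return min(others[0].x_m - e.x_m
               for e, others in rollout(ego, [lead], steps, dt_s))


ego = AgentState(x_m=0.0, v_mps=10.0)
lead = AgentState(x_m=20.0, v_mps=6.0)
gap = min_future_gap(ego, lead, steps=4)  # 12.0 m after 2 s
```

A planner could use such imagined futures to compare candidate actions before committing to one.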

d. Vision-Language-Action Models (e.g., LINGO)

- Combines:

  - Visual perception

  - Language understanding

  - Action planning

4. Mapless Autonomy

Traditional Systems

- Depend on:

  - HD maps

  - Pre-scanned environments

Wayve Approach

- No HD maps required

- Uses real-time perception + learned behavior

👉 Benefits:

- Faster geographic expansion

- Lower operational cost

- Works in unseen cities

Key Differentiators vs Competitors

| Feature | Wayve | Waymo | Tesla |
|---|---|---|---|
| Architecture | End-to-end AI | Modular + AI | End-to-end hybrid |
| HD Maps | ❌ No | ✅ Yes | ❌ No |
| Sensor Dependence | Flexible | Heavy (LiDAR) | Vision-first |
| Learning Approach | Self-supervised | Supervised + rules | Fleet learning |
| Generalization | High | Moderate | High |